A new wrist-worn device developed by MIT engineers allows users to intuitively control robotic hands – and even digital interfaces – by simply moving their own hands. The technology uses ultrasound imaging to capture the minute movements of wrist muscles and tendons, then translates those movements into commands for a robotic counterpart in real time.

How It Works: Ultrasound as a Control Interface

The core of the system is a small “ultrasound sticker” placed on the wrist. This sticker doesn’t just measure surface motion; it sees beneath the skin, imaging the interplay of muscles, tendons, and ligaments. The resulting stream of ultrasound images feeds into an AI algorithm trained to recognize which internal movements correspond to which hand gestures.

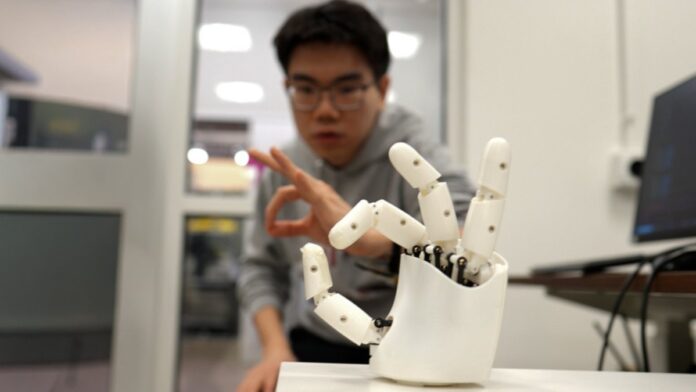
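The pipeline described above (image frames in, recognized gesture out) can be illustrated with a toy sketch. Everything in it is an assumption made for illustration: the article does not say which model the MIT team uses, so a simple nearest-centroid classifier stands in for the AI algorithm, and the "frames" are synthetic arrays rather than real ultrasound data. The names `frame_features`, `NearestCentroidGestures`, and `synthetic_frame` are hypothetical.

```python
import numpy as np


def frame_features(frame: np.ndarray, grid: int = 4) -> np.ndarray:
    """Reduce a 2D frame to grid*grid mean intensities (coarse average pooling)."""
    h, w = frame.shape
    bh, bw = h // grid, w // grid
    cropped = frame[: bh * grid, : bw * grid]
    blocks = cropped.reshape(grid, bh, grid, bw)
    return blocks.mean(axis=(1, 3)).ravel()


class NearestCentroidGestures:
    """Stand-in classifier (an assumption, not the team's model)."""

    def fit(self, frames, labels):
        feats = np.stack([frame_features(f) for f in frames])
        label_arr = np.array(labels)
        self.labels_ = sorted(set(labels))
        # One centroid per gesture: the mean feature vector of its training frames.
        self.centroids_ = np.stack(
            [feats[label_arr == lab].mean(axis=0) for lab in self.labels_]
        )
        return self

    def predict(self, frame):
        # Pick the gesture whose centroid is closest to this frame's features.
        dists = np.linalg.norm(self.centroids_ - frame_features(frame), axis=1)
        return self.labels_[int(np.argmin(dists))]


def synthetic_frame(gesture: str, rng, size: int = 32) -> np.ndarray:
    """Fake 'ultrasound' frame: noise plus a bright region that depends on the gesture."""
    frame = rng.normal(0.0, 0.1, size=(size, size))
    if gesture == "pinch":
        frame[: size // 2, :] += 1.0   # bright upper half
    else:
        frame[size // 2 :, :] += 1.0   # bright lower half
    return frame


rng = np.random.default_rng(0)
train_labels = ["pinch"] * 10 + ["fist"] * 10
train_frames = [synthetic_frame(g, rng) for g in train_labels]
model = NearestCentroidGestures().fit(train_frames, train_labels)
print(model.predict(synthetic_frame("pinch", rng)))
```

A real system would need a far richer model and real training data; the sketch only shows the shape of the data flow from image stream to gesture label.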

The approach is a significant step forward because robots have long struggled with the dexterity and nuance of human hands. Tasks like peeling a banana without crushing it remain challenging for machines because the complex coordination of human wrists and fingers is hard to replicate. The MIT device bypasses this issue by capturing that coordination directly from a human user.

Real-World Applications and Testing

In tests, volunteers wearing the wristband were able to direct a robotic hand to perform tasks like grabbing tennis balls, making sign language gestures, and even playing piano notes. The system is precise enough to distinguish all 26 letters of the American Sign Language alphabet and to translate gestures, such as a pinch, into digital commands, such as zooming.
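Turning a recognized gesture into a digital command could look like the following sketch. Only the pinch-to-zoom pairing comes from the tests described above; the `fist` entry, the `CommandMapper` class, and the majority-vote smoothing over recent predictions are assumptions added for illustration, not details of the MIT system.

```python
from collections import Counter, deque

# Pinch -> zoom is from the article; the "fist" entry is a hypothetical example.
GESTURE_COMMANDS = {"pinch": "zoom", "fist": "select"}


class CommandMapper:
    """Emit a command only when one gesture dominates the recent predictions."""

    def __init__(self, window: int = 5, min_votes: int = 3):
        self.recent = deque(maxlen=window)
        self.min_votes = min_votes

    def update(self, gesture: str):
        self.recent.append(gesture)
        top, votes = Counter(self.recent).most_common(1)[0]
        if votes >= self.min_votes:
            # Gestures without a mapped command yield None.
            return GESTURE_COMMANDS.get(top)
        return None


mapper = CommandMapper()
stream = ["pinch", "pinch", "open", "pinch", "pinch"]
commands = [mapper.update(g) for g in stream]
print(commands)
```

Requiring several agreeing predictions before acting is one simple way to keep a single misread frame from triggering a command.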

The implications extend beyond robotics. The team believes this technology could replace traditional hand-tracking in virtual and augmented reality, offering a more natural and immersive control method. Imagine interacting with VR environments without physical controllers, just by moving your hands.

Future Development and Scaling

The device is still bulky, resembling a “cyberpunk gauntlet” rather than a sleek smartwatch, and the MIT team is working to miniaturize the electronics. They also plan to expand their AI training dataset to include a wider range of hand sizes and shapes so that the system works for more users.

“We think these wearable ultrasound bands can provide intuitive and versatile controls for virtual reality and robotic hands,” says Xuanhe Zhao, a mechanical engineering professor at MIT.

Ultimately, the goal is to create a user-friendly wearable that can remotely control robots with the same ease as moving one’s own fingers. This technology could revolutionize how humans interact with machines, bridging the gap between our natural dexterity and the limitations of current robotic systems.